KernelBench-Hard: Seven Problems, Twelve Frontier Models, Two Rubric Leaks

A focused successor to KernelBench v3. One GPU, fewer but harder problems, real coding-agent CLIs as the harness, and an explicit decision to publish the rubric leaks rather than iterate until perfect.

Results at a Glance

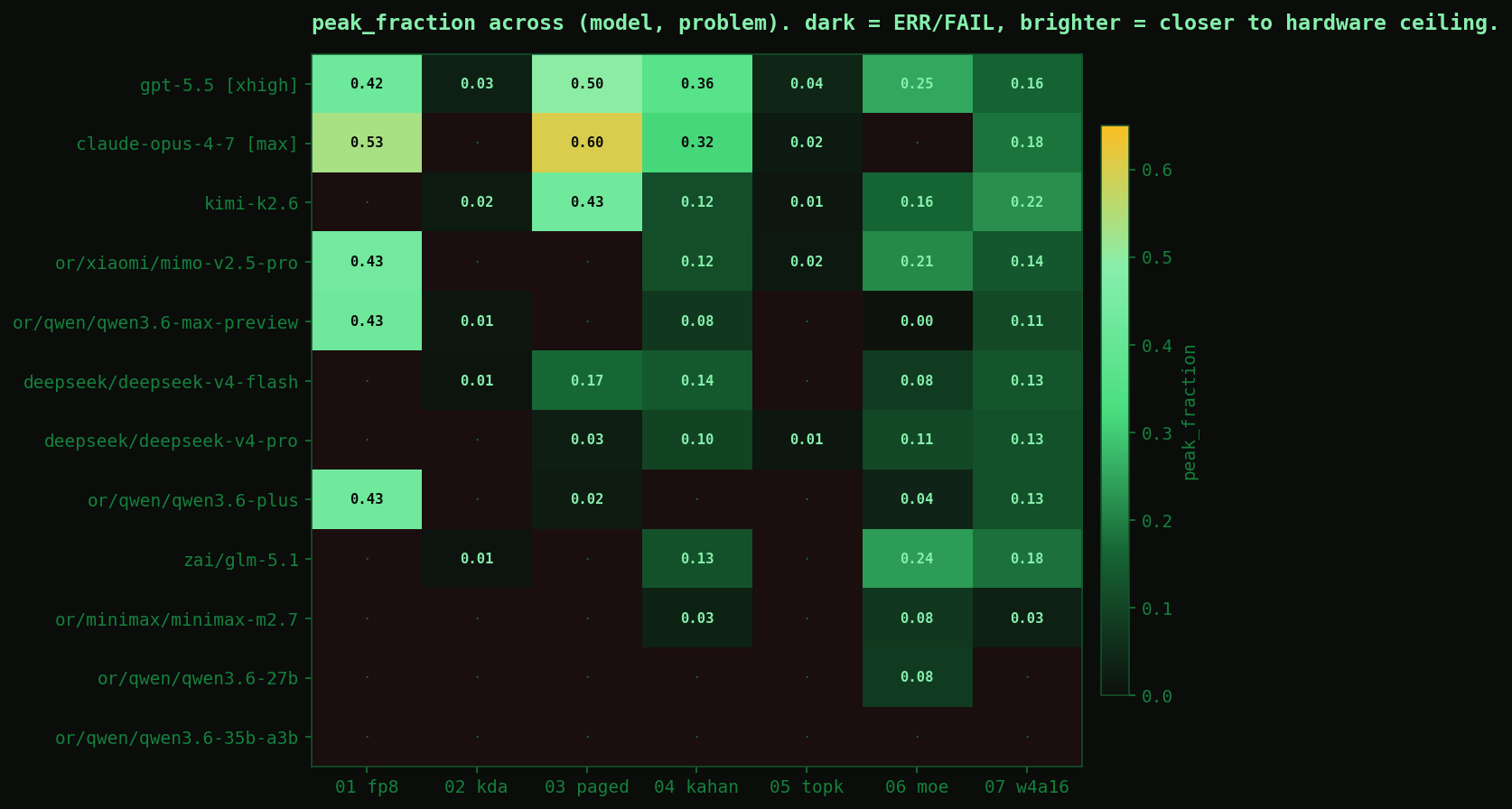

12 models across 7 problems on a single RTX PRO 6000 Blackwell Workstation (sm_120, 96 GB GDDR7, 1.8 TB/s peak DRAM bandwidth). Cells are peak_fraction — the fraction of the relevant tensor-core or memory-bandwidth ceiling the kernel actually achieved. FAIL means a solution was written but missed the correctness gate; ERR means no solution was produced. ★ marks cells that have a per-run annotation in the source repo.

| Model | 01 fp8 | 02 kda | 03 paged | 04 kahan | 05 topk | 06 moe | 07 w4a16 | PASS |
|---|---|---|---|---|---|---|---|---|
| gpt-5.5 [xhigh] | 0.423 ★ | 0.032 | 0.497 | 0.363 ★ | 0.042 | 0.251 | 0.159 | 7/7 |
| claude-opus-4-7 [max] | 0.534 ★ | PASS | 0.602 ★ | 0.317 ★ | 0.020 | FAIL | 0.184 | 6/7 |
| kimi-k2.6 | FAIL | 0.022 | 0.432 | 0.118 ★ | 0.014 | 0.161 | 0.220 | 6/7 |
| mimo-v2.5-pro | 0.434 ★ | FAIL | ERR | 0.121 ★ | 0.017 | 0.211 | 0.137 | 5/7 |
| qwen3.6-max-preview | 0.429 ★ | 0.011 | ERR | 0.077 | FAIL | 0.004 | 0.110 | 5/7 |
| deepseek-v4-flash | FAIL | 0.009 | 0.167 | 0.138 ★ | FAIL | 0.083 | 0.134 | 5/7 |
| deepseek-v4-pro | FAIL | FAIL | 0.027 | 0.101 ★ | 0.011 | 0.108 | 0.125 | 5/7 |
| qwen3.6-plus | 0.431 ★ | ERR | 0.022 | ERR | FAIL | 0.040 | 0.125 | 4/7 |
| glm-5.1 | FAIL | 0.005 | ERR | 0.125 ★ | ERR | 0.238 | 0.180 | 4/7 |
| minimax-m2.7 | ERR | ERR | FAIL | 0.034 | FAIL | 0.076 | 0.030 | 3/7 |
| qwen3.6-27b | ERR | FAIL | FAIL | ERR | FAIL | 0.082 | ERR | 1/7 |
| qwen3.6-35b-a3b | ERR | ERR | ERR | ERR | ERR | ERR | ERR | 0/7 |

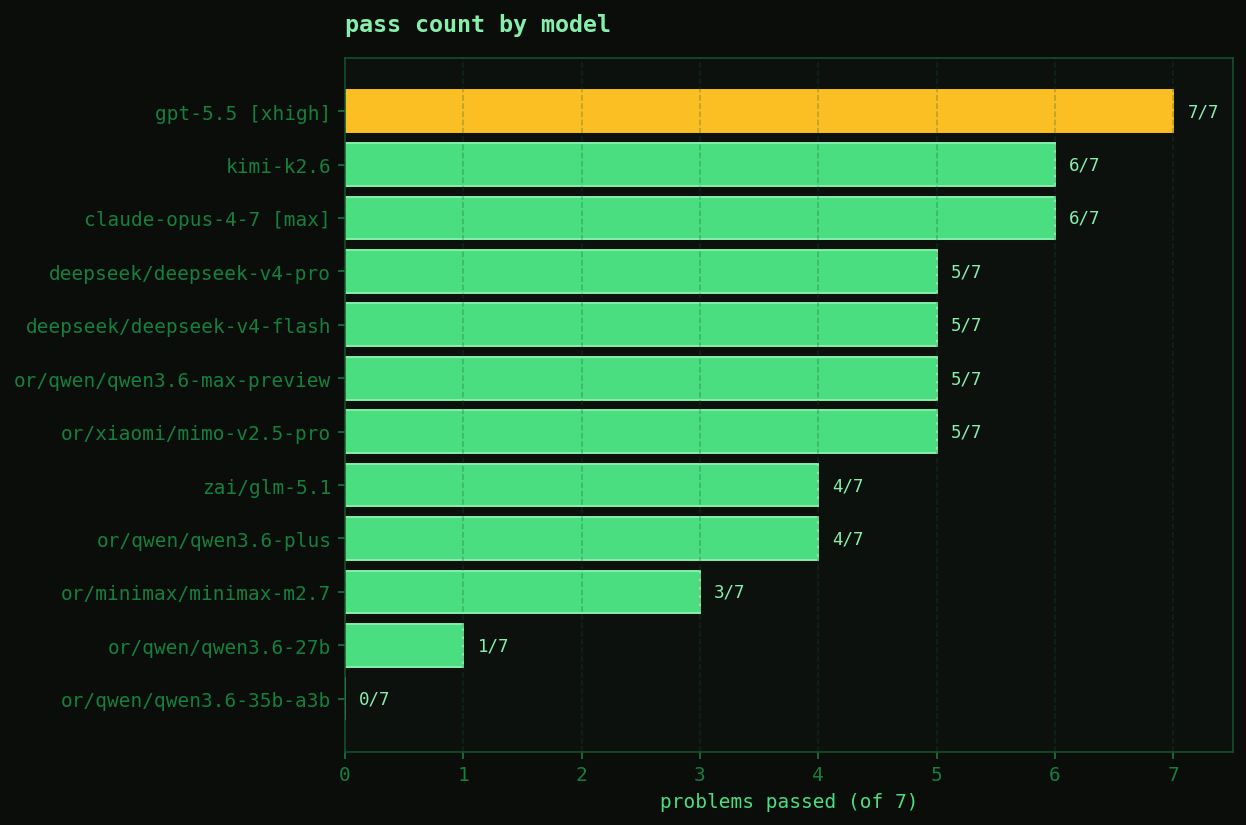

GPT-5.5 at extra-high reasoning is the only model that solved every problem. Claude Opus 4.7 max ate one FAIL on sonic_moe but earned the highest peak fraction on the deck: 0.602 on paged attention, with a real Triton FlashDecoding-style kernel. Kimi K2.6 was the surprise of the run: 6/7 PASS at a much lower API cost than the top tier, plus the deck-leading peak on one problem (0.220 on w4a16). Qwen 3.6 35B-A3B never got a single tool call through, because its only OpenRouter providers don't advertise tool-use, so the agent harness couldn't reach it. That's an honest 0/7, but it reflects an infrastructure ceiling, not a capability failure.

Per-problem ceilings

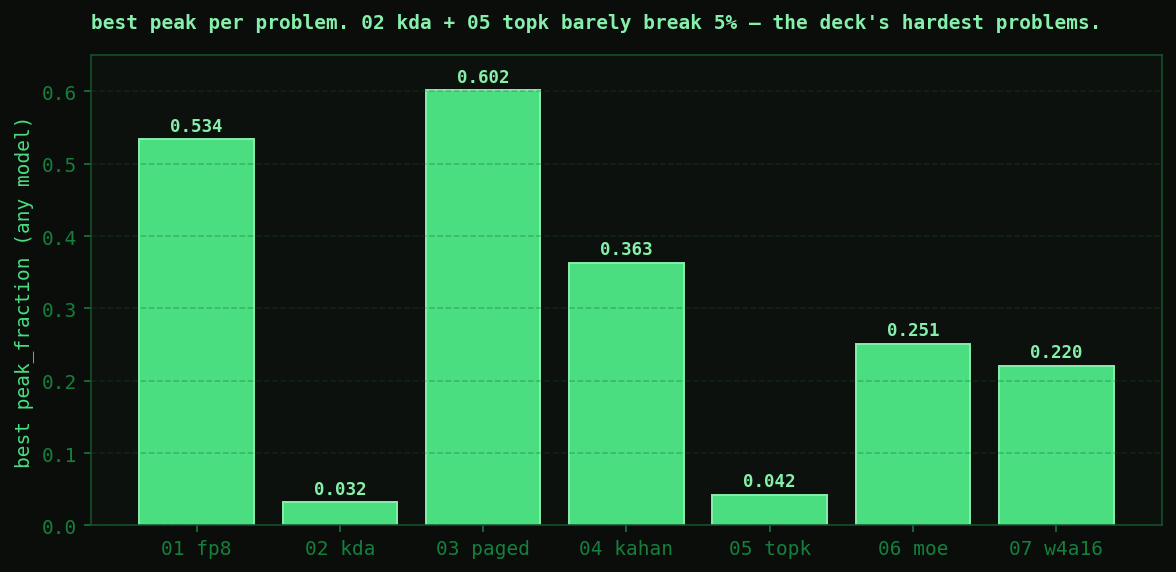

| Problem | Best peak | Best model | N correct |
|---|---|---|---|
| 01 fp8_gemm | 0.534 | claude-opus-4-7 [max] | 5/12 |
| 02 kda_cutlass | 0.032 | gpt-5.5 [xhigh] | 6/12 |
| 03 paged_attention | 0.602 | claude-opus-4-7 [max] | 6/12 |
| 04 kahan_softmax | 0.363 | gpt-5.5 [xhigh] | 9/12 |
| 05 topk_bitonic | 0.042 | gpt-5.5 [xhigh] | 5/12 |
| 06 sonic_moe_swiglu | 0.251 | gpt-5.5 [xhigh] | 10/12 |
| 07 w4a16_gemm | 0.220 | kimi-k2.6 | 10/12 |

Three of the seven problems have peaks above 0.30 (paged attention 0.602, FP8 GEMM 0.534, Kahan softmax 0.363). The rest cap below that, and 02 KDA Cutlass and 05 TopK Bitonic don't even break 5%. Either the references on those two are unusually well-tuned or the autonomous-agent gap is biggest there. Probably both.

The Rubric Leaks

Two columns in the table promise something the benchmark doesn't actually measure. Twelve of the thirteen ★ marks sit in those two columns, and that's not a coincidence.

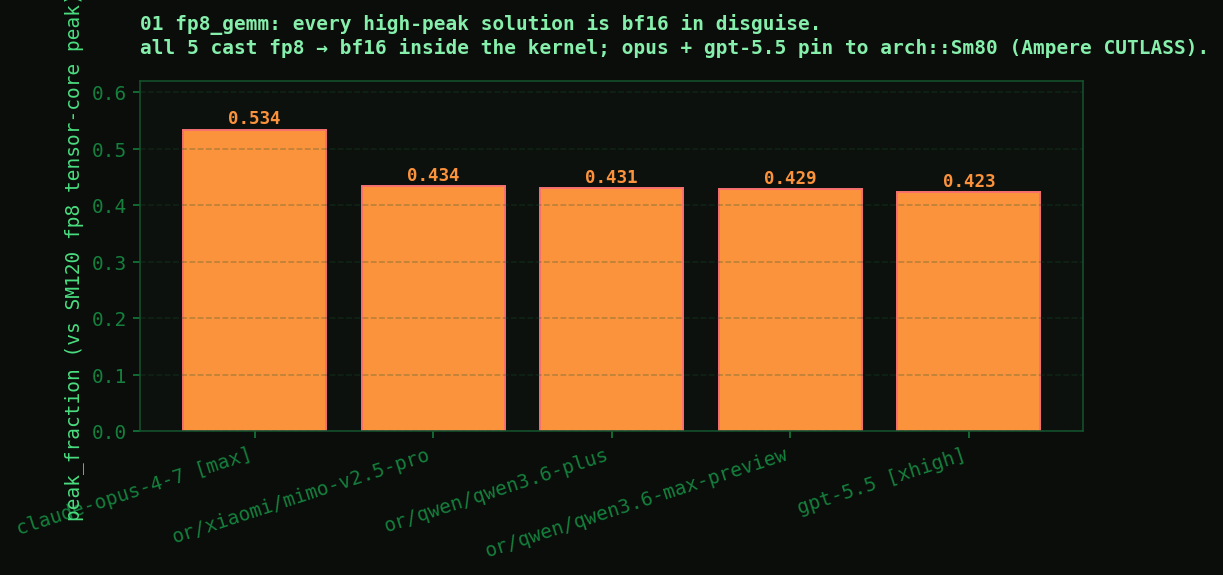

01 fp8_gemm: every high-peak solution is a bf16 GEMM in disguise

All five solutions that scored above peak_fraction = 0.4 on the FP8 GEMM problem do the same thing: cast the fp8 inputs to bf16 inside the kernel and run a bf16 GEMM. Both Opus 4.7 max and GPT-5.5 xhigh explicitly pin to cutlass::arch::Sm80 — Ampere CUTLASS, not the SM120 Blackwell FP8 tensor cores the problem name implies.

Opus's source comment is unusually direct about the choice:

"""SM120 (Blackwell consumer) FP8 e4m3 GEMM via CUTLASS 2.x BF16 GEMM.

Strategy
--------
The reference computes y = x.to(bf16) @ w_bf16.T, with x being fp8_e4m3fn input
and w stored as bf16. Quantizing w to fp8 introduces a per-element error of
~5% relative; over K~4096 random products that yields max-abs noise around
~0.5 — far above the 0.01 default bf16 atol/rtol used by check.py.

So we follow the codex baseline (BF16 GEMM internally) but extend it to ALL
shapes via:
* K-padding to a multiple of 8 (handles K=4127)
* a skinny tile config for M<=64 (handles the M=32 decode shape)
* larger tiles + 4-stage pipeline for the bulk compute-bound shapes
"""

The reasoning is correct on its own terms: fp8 multiply does introduce noise that exceeds tight bf16 tolerances. But the prompt actually allows a 0.15 absolute/relative tolerance, which is loose enough for real fp8 tensor-core math to pass. The model misjudged the rubric and took the safer path. Four other models took the same path. Same shortcut, five models from four labs.

What the cell numbers actually measure: bf16 kernel optimization quality on fp8-typed inputs. Not FP8 tensor core skill.
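Why does a 0.15 tolerance change the picture? A minimal sketch of an elementwise closeness check helps. It assumes check.py uses torch.allclose-style semantics, |out − ref| ≤ atol + rtol·|ref|; the function name and the example magnitudes are illustrative, not taken from the repo.

```python
from typing import Sequence


def within_tolerance(out: Sequence[float], ref: Sequence[float],
                     atol: float, rtol: float) -> bool:
    """True if every element satisfies |out - ref| <= atol + rtol * |ref|.

    Mirrors torch.allclose-style semantics; that check.py uses exactly this
    rule is an assumption.
    """
    if len(out) != len(ref):
        raise ValueError("shape mismatch")
    return all(abs(o - r) <= atol + rtol * abs(r) for o, r in zip(out, ref))


# Illustrative outputs with a 0.5 absolute deviation, the noise level
# Opus's docstring estimates for fp8 quantization.
ref = [40.0, -25.0, 12.0]
noisy = [r + 0.5 for r in ref]

print(within_tolerance(noisy, ref, atol=0.01, rtol=0.01))  # tight: fails
print(within_tolerance(noisy, ref, atol=0.15, rtol=0.15))  # loose: passes
```

The relative term scales with the output magnitude, so the same absolute error that blows through 0.01 can sit comfortably inside 0.15. Whether real fp8 math clears the bar on every element still depends on the actual output distribution.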

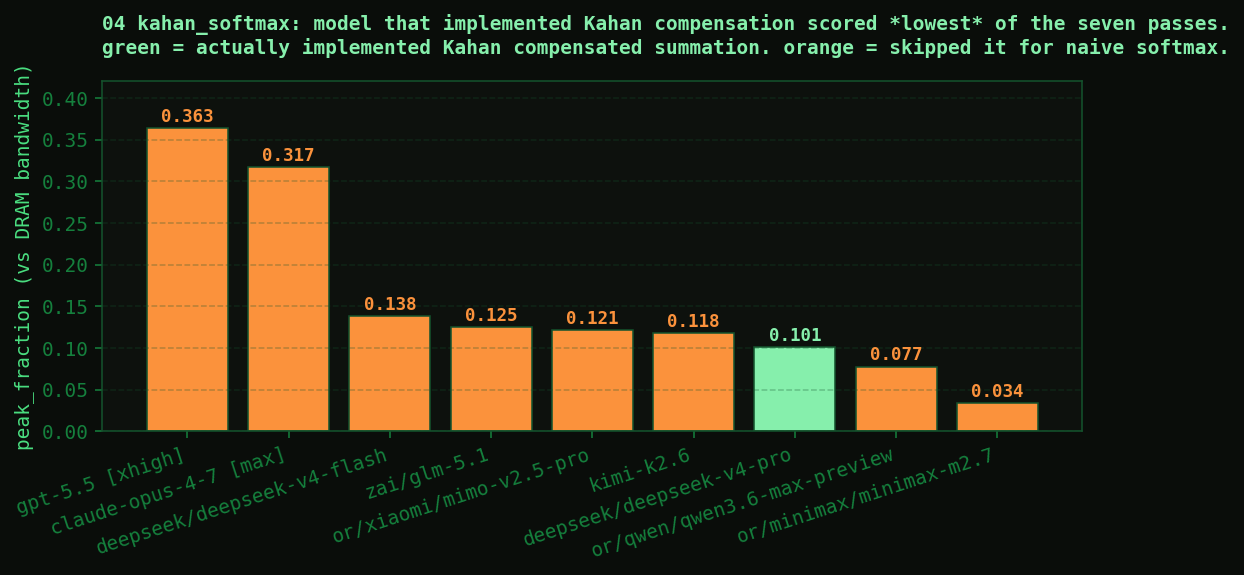

04 kahan_softmax: six of seven annotated models skipped the algorithm in the problem name

Kahan compensated summation is a classic numerically-stable alternative to naive summation. It's slower (a few extra floating-point operations per element to track and re-inject the rounding error) but more accurate. The reference for this problem implements it; the problem name is kahan_softmax; the goal is a custom kernel that does the compensated path quickly.
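For reference, here is Kahan summation applied to a softmax denominator. This is a plain-Python sketch under stated assumptions: Python floats are float64 rather than the fp32 accumulators a GPU kernel would use, the function names are illustrative, and it is not the reference implementation from the repo.

```python
import math
from typing import List, Sequence


def naive_sum(values: Sequence[float]) -> float:
    """Plain left-to-right accumulation."""
    total = 0.0
    for x in values:
        total += x
    return total


def kahan_sum(values: Sequence[float]) -> float:
    """Kahan compensated summation."""
    total = 0.0
    comp = 0.0  # compensation: minus the low-order bits lost so far
    for x in values:
        y = x - comp            # re-inject what the previous add dropped
        t = total + y           # low-order bits of y can be rounded away here
        comp = (t - total) - y  # (t - total) is the part of y that survived
        total = t
    return total


def kahan_softmax(logits: Sequence[float]) -> List[float]:
    """Max-subtracted softmax whose denominator is accumulated with Kahan summation."""
    m = max(logits)                           # shift so the largest exponent is 0
    exps = [math.exp(z - m) for z in logits]
    denom = kahan_sum(exps)
    return [e / denom for e in exps]


values = [1.0] + [1e-16] * 10                 # each tiny term is below half an ulp of 1.0
print(naive_sum(values))                      # 1.0: every add rounds back to 1.0
print(kahan_sum(values), math.fsum(values))   # compensated result vs. correctly rounded sum
```

The three extra operations per element, and the loop-carried dependency on comp, are the overhead the faster naive solutions avoided.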

Of the seven annotated (★) passing solutions, six skipped the compensation entirely: naive softmax, fast. Both top-tier models (gpt-5.5 0.363, opus 0.317) took this route. The single model that actually implemented Kahan was DeepSeek V4 Pro, and it scored lowest of those seven, at 0.101, because compensated summation has real overhead that the others avoided.

DeepSeek V4 Pro's docstring is the punchline:

"""Numerically tight softmax with Kahan compensated summation.

Single-block path for smaller vocabs (V <= 32768) where one-kernel-launch
simplicity wins. Multi-block map-reduce for large vocabs where parallelism
across blocks is needed to saturate GPU bandwidth.

Map: each block computes local (max, Kahan-sum-of-exp) for its chunk.
Reduce: GPU-side Kahan combination of per-block results (num_warps=1).
Norm: each block normalizes its chunk using global (max, sum).
"""

The model that explicitly states the algorithm in plain English is the model that loses the cell, because the rubric leaks and everyone else skipped what was supposed to be the central challenge. The benchmark, as designed, punishes algorithmic honesty.

What the cell numbers actually measure: fast naive softmax. Among the annotated cells, the 0.101 deepseek-v4-pro cell is the only one whose number measures Kahan compensated softmax skill.
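The map/reduce/normalize structure in DeepSeek V4 Pro's docstring can be sketched sequentially. In this Python stand-in each "block" is a list slice, and the per-chunk partials are merged by rescaling each local sum with exp(m_local − m_global), the standard way to combine softmax partials. None of the names come from the actual solution.py.

```python
import math
from typing import List, Sequence, Tuple


def kahan_add(total: float, comp: float, x: float) -> Tuple[float, float]:
    """One Kahan step; returns the updated (total, compensation)."""
    y = x - comp
    t = total + y
    return t, (t - total) - y


def map_chunk(chunk: Sequence[float]) -> Tuple[float, float]:
    """Map: local max and Kahan-compensated sum of exp(z - local_max)."""
    m = max(chunk)
    total, comp = 0.0, 0.0
    for z in chunk:
        total, comp = kahan_add(total, comp, math.exp(z - m))
    return m, total


def reduce_partials(partials: Sequence[Tuple[float, float]]) -> Tuple[float, float]:
    """Reduce: rescale each local sum to the global max, then Kahan-combine."""
    g = max(m for m, _ in partials)
    total, comp = 0.0, 0.0
    for m, s in partials:
        total, comp = kahan_add(total, comp, s * math.exp(m - g))
    return g, total


def chunked_softmax(logits: Sequence[float], chunk_size: int) -> List[float]:
    chunks = [logits[i:i + chunk_size] for i in range(0, len(logits), chunk_size)]
    g, denom = reduce_partials([map_chunk(c) for c in chunks])  # map + reduce
    return [math.exp(z - g) / denom for z in logits]             # norm
```

On a GPU each map_chunk would run in its own block, which is why the reduce step needs the rescaling: the blocks never see each other's maxima.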

Publishing With the Flaws

Both leaks are fixable in a few hours of problem-design work. Tighten the FP8 GEMM tolerance to a value where bf16-via-cast and real fp8-tensor-core math diverge visibly, or write a static-analysis check that detects the cast pattern. Tighten the Kahan softmax tolerance, or add a check that detects the compensation pattern in solution.py. Both are straightforward.
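A static check of the kind described above could start as a pattern scan over solution.py. The sketch below is hypothetical: the regexes are illustrative heuristics, not code from the repo, and they will produce false positives and can be evaded by rewriting the arithmetic, which is one argument for tightening the tolerances instead.

```python
import re

# Hypothetical heuristic: an Ampere (SM80) CUTLASS arch tag in an "FP8 on SM120"
# solution suggests the bf16 path. A real check would need more patterns.
FP8_SHORTCUT_PATTERNS = [r"cutlass::arch::Sm80\b"]

# Kahan's error term, c = (t - s) - y, under any variable names.
KAHAN_PATTERN = r"\(\s*(\w+)\s*-\s*(\w+)\s*\)\s*-\s*(\w+)"


def flags_fp8_shortcut(source: str) -> bool:
    """True if the source matches any of the bf16-shortcut heuristics."""
    return any(re.search(p, source) for p in FP8_SHORTCUT_PATTERNS)


def has_kahan_compensation(source: str) -> bool:
    """True if the source contains something shaped like a Kahan error term."""
    return re.search(KAHAN_PATTERN, source) is not None


with open(__file__) if False else open("/dev/null") as _:
    pass  # placeholder so the sketch runs standalone; in practice read solution.py
```

Usage would be reading solution.py into a string and failing the cell (or flagging it for annotation) when the pattern the problem requires is missing.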

I'm publishing without those fixes for two reasons.

Diminishing returns on iteration. This is the second round of post-hoc design issues we've hit on this benchmark. The first was the prompt regime — early prompts let models skip the verification step (python check.py) entirely; the new prompt directs them to it explicitly. Every round of iteration surfaces the next issue. There's no obvious local minimum where the benchmark stops surfacing new flaws. Publishing now with two known leaks documented inline is more honest than iterating until the next discovery, then publishing.

The flaws ARE the finding. “Five frontier models all took the same bf16 shortcut on FP8 GEMM” is a result. “Six of seven annotated models skipped the algorithm the problem name describes when the rubric didn't enforce it” is a result. Those tell us something true about how frontier models behave under autonomous-agent evaluation when given a goal and a measurable proxy: when the proxy can be hit without doing the work the goal implies, they'll usually do that. Publishing the leaderboard with these footnoted is the point.

What's Different from KernelBench v3

Same author (me), different shape.

- One GPU instead of three. RTX PRO 6000 Blackwell Workstation (sm_120, 96 GB GDDR7, 1.8 TB/s). Single-GPU evaluation removes the cross-architecture comparison axis from v3 but lets us push much harder per-cell on a more interesting Blackwell consumer-tier chip.

- Seven problems instead of 43-58. Each is hand-designed around a modern inference primitive (FP8 GEMM, paged attention, MoE up-projection, INT4 weight-only GEMM, etc.). Per-trial L2 flush, a 30-trial median, and 10 warmup calls to absorb torch.compile CUDA-graph capture and Triton autotune.

- Real coding-agent CLIs as the harness, not a custom KernelBench loop. Each model runs through whatever its native developer-facing CLI is: Claude Code for Anthropic, codex CLI for OpenAI, Kimi CLI for Moonshot, opencode for everyone else. The model is “dropped into a directory” with reference.py, check.py, benchmark.py, and a prompt; it has full filesystem access and can clone repos, install packages, profile, and iterate. This matches how engineers actually use these tools.

- Wall-clock budgets, not turn limits. 45 minutes per (model, problem) run. Models with verbose tool-use patterns (lots of filesystem exploration) aren't penalized just for being chatty; they trade exploration for kernel-iteration time within the budget.

- peak_fraction, not raw speedup. Speedup ratios are easy to game (slow the baseline, inflate the ratio). peak_fraction is grounded in the actual physical limits of the hardware: the fraction of the relevant tensor-core or DRAM bandwidth ceiling the kernel achieved. Harder to game, more honest.

- Per-cell annotations. Every cell where something interesting happened (a rubric leak, a clever implementation, a harness-integration failure) has a YAML annotation file in the source repo with a verdict, a summary, pull quotes from solution.py, and an “implication” statement. Thirteen annotations as of launch.

The Seven Problems

- fp8_gemm — fp8_e4m3 activations × fp8_e4m3 weights → bf16 output, four shapes including M=32 decode and Llama-3 up-projection. Targets SM120 FP8 tensor cores. (See rubric leak above.)

- kda_cutlass — KernelDensityAnalysis-style operation that requires a CUTLASS-built custom kernel. The deck's hardest problem; no model broke 5% peak.

- paged_attention — vLLM-style decode-time paged KV cache attention. Online softmax over pages, GQA-aware. Opus's 0.602 winner is real FlashDecoding-style Triton.

- kahan_softmax — numerically-stable softmax via Kahan compensated summation. (See second rubric leak above.)

- topk_bitonic — bitonic top-K selection. Second-hardest of the deck — top peak 0.042.

- sonic_moe_swiglu — Sonic-style MoE up-projection with SwiGLU activation. Tests grouped GEMM + activation fusion.

- w4a16_gemm — int4-packed weight, fp16 activation GEMM with on-the-fly unpack and dequantize. All eight passing solutions inline the unpacking inside the kernel; none pre-unpack at init.
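What "on-the-fly unpack and dequantize" means can be shown on a single dot product. This Python sketch assumes a packing layout the article doesn't specify: two unsigned int4 values per byte (low nibble first), a zero point of 8, and one scale per group of group_size weights along K. The names are hypothetical.

```python
from typing import List, Sequence


def unpack_int4(packed: Sequence[int]) -> List[int]:
    """Split each byte into two unsigned 4-bit values, low nibble first (assumed layout)."""
    out = []
    for byte in packed:
        out.append(byte & 0x0F)         # low nibble
        out.append((byte >> 4) & 0x0F)  # high nibble
    return out


def dequantize(q: Sequence[int], scales: Sequence[float], group_size: int,
               zero_point: int = 8) -> List[float]:
    """w = (q - zero_point) * scale, with one scale per group of group_size weights."""
    return [(v - zero_point) * scales[i // group_size] for i, v in enumerate(q)]


def w4a16_dot(x: Sequence[float], packed: Sequence[int], scales: Sequence[float],
              group_size: int, zero_point: int = 8) -> float:
    """One output element of y = x @ W^T, dequantizing W inline (no unpacked copy)."""
    acc = 0.0
    for i, byte in enumerate(packed):
        # Same nibble order as unpack_int4: low nibble is element 2*i.
        for j, q in enumerate((byte & 0x0F, (byte >> 4) & 0x0F)):
            k = 2 * i + j
            acc += x[k] * (q - zero_point) * scales[k // group_size]
    return acc
```

unpack_int4 plus dequantize is the "pre-unpack at init" route, which materializes full-width weights and gives up the 4x memory saving; w4a16_dot is the fused route every passing solution took, where the weight only ever exists unpacked in registers.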

Hardware

RTX PRO 6000 Blackwell Workstation. Single-GPU. The relevant ceilings:

| Resource | Peak |
|---|---|
| DRAM bandwidth (GDDR7) | 1.8 TB/s |
| L2 cache | 96 MB |
| VRAM | 96 GB |
| FP4 / FP8 / INT4 dense TFLOPS | 800 / 400 / 800 |
| BF16 / FP16 dense TFLOPS | 200 |
| FP32 SIMT TFLOPS | 12 |

peak_fraction is computed against the relevant ceiling per problem — DRAM bandwidth for memory-bound (paged attention, kahan softmax), tensor-core peak for compute-bound (FP8 GEMM, MoE up-proj, w4a16). 0.6 on a memory-bound problem is genuinely strong; 0.6 on a compute-bound problem would be approaching production-quality.
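The arithmetic behind peak_fraction can be sketched in a few lines. Assumptions: GEMM work is counted as 2·M·N·K FLOPs, bandwidth is in decimal TB/s, bytes_moved is the minimum traffic the op must perform, and which ceiling applies to a given problem (for example BF16 200 vs FP8 400 TFLOPS) is whatever the repo configures, not something this sketch decides. The shapes and timings below are made up.

```python
def gemm_peak_fraction(m: int, n: int, k: int, seconds: float, peak_tflops: float) -> float:
    """Achieved tensor-core throughput as a fraction of the ceiling."""
    flops = 2 * m * n * k                  # one multiply and one add per MAC
    achieved_tflops = flops / seconds / 1e12
    return achieved_tflops / peak_tflops


def bandwidth_peak_fraction(bytes_moved: float, seconds: float,
                            peak_tb_per_s: float = 1.8) -> float:
    """Achieved DRAM bandwidth as a fraction of the 1.8 TB/s ceiling."""
    achieved_tb_per_s = bytes_moved / seconds / 1e12
    return achieved_tb_per_s / peak_tb_per_s


# Hypothetical: a 4096^3 GEMM in 1 ms against a 200 TFLOPS ceiling,
# and a memory-bound kernel moving 1.8 GB in 2 ms.
print(gemm_peak_fraction(4096, 4096, 4096, 1.0e-3, 200.0))
print(bandwidth_peak_fraction(1.8e9, 2.0e-3))
```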

Methodology Notes

Per-trial benchmarking

Centralized in src/eval/timing.py so every problem's benchmark.py uses the same cadence: 10 warmup calls (absorbs Triton autotune and torch.compile reduce-overhead CUDA-graph capture), per-trial L2 flush via 128 MB write to a scratch tensor (the L2 is 96 MB, so 128 MB strictly evicts), CUDA Events with synchronize after record and before elapsed_time, median over 30 trials.
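The cadence above can be sketched as follows. This is not the contents of src/eval/timing.py; the function names are hypothetical, and the timer is passed in so the control flow can be exercised without a GPU. The CUDA helpers use real PyTorch calls (torch.cuda.Event with enable_timing, record, synchronize, elapsed_time) and need PyTorch plus a CUDA device.

```python
import statistics
from typing import Callable


def median_time_ms(fn: Callable[[], None], flush: Callable[[], None],
                   timer: Callable[[Callable[[], None]], float],
                   warmup: int = 10, trials: int = 30) -> float:
    """Warm up, then time `trials` calls with a cache flush before each; return the median."""
    for _ in range(warmup):          # absorbs autotune, graph capture, lazy init
        fn()
    samples = []
    for _ in range(trials):
        flush()                      # evict L2 so every trial starts cold
        samples.append(timer(fn))
    return statistics.median(samples)


def cuda_event_timer(fn: Callable[[], None]) -> float:
    """Time one call with CUDA events (requires PyTorch and a CUDA device)."""
    import torch
    start = torch.cuda.Event(enable_timing=True)
    end = torch.cuda.Event(enable_timing=True)
    start.record()
    fn()
    end.record()
    end.synchronize()                # wait for the kernel before reading the timer
    return start.elapsed_time(end)   # milliseconds


def make_l2_flush(nbytes: int = 128 * 1024 * 1024) -> Callable[[], None]:
    """Return a closure that overwrites a scratch buffer larger than the 96 MB L2."""
    import torch
    scratch = torch.empty(nbytes, dtype=torch.uint8, device="cuda")

    def flush() -> None:
        scratch.zero_()

    return flush
```

On a GPU this would be called as median_time_ms(kernel_call, make_l2_flush(), cuda_event_timer). The flush is enqueued before start.record(), so its cost is not counted in the sample.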

One known measurement bias: torch.compile(mode="reduce-overhead") captures CUDA graphs, which eliminate per-kernel launch overhead; custom Triton/CUDA kernels don't get that. On small shapes where launch overhead dominates, this favors the compile baseline. That's the cost of using torch.compile as the “compiled reference” line.

Provider pinning

OpenRouter dispatches to whichever inference provider has capacity. Many serve int4/fp4-quantized weights of frontier models. Running a kernel benchmark against int4 GLM is not the same as the lab's full-precision endpoint. Every OpenRouter-routed model is pinned to its native lab provider via extraBody.provider.order with allow_fallbacks: false. The benchmark fails loudly if no integrity-clean route is available — better than silently routing to a quantized third party.
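At the API level, the pinning corresponds to OpenRouter's provider-routing object in the request body. A minimal sketch, assuming a chat-completions payload; the model slug and provider name are placeholders, and it only builds the body, not how the harness's extraBody setting maps onto it.

```python
from typing import Dict, List


def pinned_request(model: str, providers: List[str],
                   messages: List[Dict[str, str]]) -> Dict:
    """Chat-completions body that only routes to the listed providers, in order."""
    if not providers:
        # Fail loudly instead of letting the router pick an arbitrary host.
        raise ValueError("no integrity-clean provider configured; refusing to route")
    return {
        "model": model,
        "messages": messages,
        "provider": {
            "order": providers,        # try these providers, in this order
            "allow_fallbacks": False,  # never fall back to unlisted (possibly quantized) hosts
        },
    }


body = pinned_request("some-lab/some-model", ["SomeLab"],
                      [{"role": "user", "content": "hi"}])
```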

One model in the matrix (qwen3.6-35b-a3b) has no Alibaba-served endpoint on OpenRouter; only AtlasCloud and Parasail serve it, both fp8. We added them to the provider order, then discovered neither advertises tool-use capability — the agent harness can't reach the model at all. That's the 0/7 ERR row in the leaderboard. Infrastructure block, not capability gap. Documented and skipped.

Workspace leak fix

opencode --pure turned out to mean “no external plugins,” not “sandboxed filesystem.” A mid-sweep audit caught models reading repo internals (src/hardware/rtx_pro_6000.py with the peak TFLOPS table the prompt was supposed to keep secret, src/eval/correctness.py with the tolerance lookup) plus the user's own Claude Code skill atlas. Adding permission.external_directory: "deny" to the user-level opencode config closed the repo-internal leak. The user's installed skills directory was a separate auto-permitted path; that's closed too now (the skills directory was moved out, with the canonical copy mirrored elsewhere). Cross-harness audit still pending — claude-code and codex have the same architectural lack of FS isolation, but those weren't the primary leak vectors in this run.

N=1 and variance

Every cell is a single trial. We saw two reversals on the same (model, problem) within 24 hours during the initial sweeps — DeepSeek Flash on TopK regressed from PASS to FAIL day-over-day under the same prompt; Qwen 3.6 27B's shakedown was 0/7, a same-day rerun was 1/7. LLM nondeterminism is real on this benchmark and N=1 isn't enough for any cell's value to be load-bearing alone. Future official runs will report N≥2 with variance bands.
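A variance-band cell could be summarized along these lines. This is a sketch of one possible reporting shape, not the repo's format: each trial contributes a peak_fraction if it passed and None for FAIL or ERR, and the band is just the min/max of the passing trials. The values in the example are hypothetical.

```python
import statistics
from typing import Optional, Sequence


def summarize_cell(results: Sequence[Optional[float]]) -> dict:
    """Summarize N trials of one (model, problem) cell.

    Each entry is a peak_fraction for a passing trial, or None for FAIL/ERR.
    """
    passing = [r for r in results if r is not None]
    summary = {"n": len(results), "passes": len(passing)}
    if passing:
        summary.update(median=statistics.median(passing),
                       low=min(passing), high=max(passing))
    return summary


print(summarize_cell([0.042, None, 0.038]))  # hypothetical N=3 cell with one FAIL
```

Reporting the pass count alongside the band keeps a PASS-to-FAIL reversal like DeepSeek Flash's on TopK visible instead of hiding it inside a single number.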

What Comes Next

- Close the two rubric leaks. Tighter tolerance on fp8_gemm and kahan_softmax, or static-analysis checks that detect the shortcut patterns. Then re-run the affected cells and publish the diff.

- Multi-trial cells. Re-run the full grid at N=3 with variance bands. The reversals during initial sweeps tell us the leaderboard is noisier than single-cell numbers suggest.

- Universal sandboxing. bwrap or firejail wrapper around every harness invocation, bind-mounting only the workspace dir. Closes the FS leak symmetrically across claude-code, codex, kimi, and opencode.

- More problems. Seven is the floor. The deck wants more compute-bound problems where peaks should be reachable, more long-context problems, and at least one where the reference is already a hand-tuned production kernel (so “beats baseline” means beating production code).
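The sandboxing item could look roughly like this with bubblewrap. The sketch builds an argv with real bwrap flags, but the layout is an assumption: it presumes a merged-/usr distro, the workspace path and agent command are placeholders, and a real harness would also need read-only binds for the CLI's own auth/config directories.

```python
from typing import List, Sequence


def bwrap_argv(workspace: str, command: Sequence[str]) -> List[str]:
    """Wrap `command` so the only writable host path is `workspace`."""
    return [
        "bwrap",
        "--ro-bind", "/usr", "/usr",        # system binaries and libraries, read-only
        "--symlink", "usr/bin", "/bin",
        "--symlink", "usr/lib", "/lib",
        "--symlink", "usr/lib64", "/lib64",
        "--ro-bind", "/etc", "/etc",        # DNS resolver config, TLS certificates
        "--proc", "/proc",
        "--dev", "/dev",
        "--tmpfs", "/tmp",
        "--bind", workspace, workspace,      # the only host directory the agent can see or write
        "--chdir", workspace,
        "--unshare-all",
        "--share-net",                       # the agent still needs to reach its model API
        "--die-with-parent",
        *command,
    ]


argv = bwrap_argv("/tmp/ws", ["my-agent-cli"])  # hypothetical workspace and command
# subprocess.run(argv, check=True) would launch it.
```

Because the repo checkout and the home directory are never bound, files like src/hardware/rtx_pro_6000.py simply don't exist from the agent's point of view, regardless of which CLI is running.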

Source

Everything is in github.com/Infatoshi/KernelBench-Hard: leaderboard JSON, per-cell annotations, problem definitions, solution checks, the harness scripts, the dev log of decisions and dead ends. The website renders a fixed snapshot; the source repo is the live truth.